Oregon Public Broadcasting recently splashed a strange story across its web page under this rather sinister-sounding headline…

Oregon dropping artificial intelligence tool used in child abuse cases

…which reported…

Child welfare officials in Oregon will stop using an algorithm to help decide which families are investigated by social workers, opting instead for a new process that officials say will make better, more racially equitable decisions….

The story was an ill-disguised plug for one of our senators from 35,000 feet, in this case Ron Wyden…

…a chief sponsor of a bill that seeks to establish transparency and national oversight of software, algorithms and other automated systems.

“With the livelihoods and safety of children and families at stake, technology used by the state must be equitable — and I will continue to watchdog,” Wyden said.

Ahhh, yes! Our old friend, somewhere beyond the ever-receding horizon…

“Equity!”

The senator’s ire was raised to fever-pitch by an exposé from the Associated Press, which purported to investigate an algorithm used by Child Welfare bureaucracies to screen out calls reporting child abuse. If you read it, you might suspect that our senator didn’t. Nor did Oregon Public Broadcasting.

The AP focused on…

…an opaque algorithm whose statistical calculations help social workers decide which families should be investigated in the first place.

…and hung the story around the neck of…

Allegheny County [PA], the cradle of Mister Rogers’ TV neighborhood [which] uses data to support agency workers as they try to protect children from neglect.

Then the focus widened…

From Los Angeles to Colorado and throughout Oregon, as child welfare agencies use or consider tools similar to the one in Allegheny County, Pennsylvania, an Associated Press review has identified a number of concerns about the technology, including questions about its reliability and its potential to harden racial disparities in the child welfare system.

We’ll get to that “harden” stuff in a moment. But note the slushy qualifiers in this nut-graf. They’re the first steps in a journalistic tap-dance.

Start with “neglect.” To its credit, the AP notes (unlike Wyden or OPB) that…

That nuanced term can include everything from inadequate housing to poor hygiene, but is a different category from physical or sexual abuse, which is investigated separately in Pennsylvania and is not subject to the algorithm.

Oops: the nasty algorithm doesn’t touch the most serious cases.

But AP quickly pats itself on the back, and reveals that this is, in essence, a one-source story (which, in AP’s former incarnation, was a big no-no):

According to new research from a Carnegie Mellon University team obtained exclusively by AP, Allegheny’s algorithm in its first years of operation showed a pattern of flagging a disproportionate number of Black* children for a “mandatory” neglect investigation, when compared with white children. The independent researchers, who received data from the county, also found that social workers disagreed with the risk scores the algorithm produced about one-third of the time.

This doesn’t stop the AP from saying…

Advocates worry that if similar tools are used in other child welfare systems with minimal or no human intervention–akin to how algorithms have been used to make decisions in the criminal justice system–they could reinforce existing racial disparities in the child welfare system.

Shall we count the “coulda’s” in this piece of narrative? And what, exactly, are the “existing racial disparities”? AP never bothers to answer.

Nor did this aside stop the presses…

Because family court hearings are closed to the public and the records are sealed, AP wasn’t able to identify first-hand any families who the algorithm recommended be mandatorily investigated for child neglect, nor any cases that resulted in a child being sent to foster care.

If you skin the “he said/she said” stuff from the bottomless story, you find that it really centers on something dear to the progressive narrative, our old friend…

Disparities

…which rests on the core progressive belief that if there are 10 percent POCs in any given sample of humanity, then there simply cannot be, let us say, 12 percent of problems in that group attributable to anything other than rank systemic racism.

There is, of course, no upper limit on, say, political office holders, as Portland has proved; but anything on the downside must not be accounted as a real number.

Do not ask these people to balance your checkbook.

And what about that academic study that somehow found its way into the hands of the AP reporters? You can follow the hotlink above (OPB didn’t bother with that); but it’s a heavy lift, dense with the opaque language favored by professors…

First, we excluded all referrals not marked General Protective Services (GPS) by deleting all data entries where the variable REFER_TYPE_GPS_NULL was not 1.

But here’s the grand hoo-ha:

Particularly, the Allegheny Family Screening Tool on its own would have made more racially disparate decisions than workers, and workers used the tool to decrease those algorithmic disparities.

Give that a moment’s thought: we’re back to the progressive orthodoxy that any “group” or capitalized “race” simply cannot have an excess of problems—such as child neglect—greater than its proportion of the population. Thus…

Disparities

Please note that humans in Pittsburgh are arbitrarily changing the computer’s scores. Frequently.

Why?

Carnegie Mellon** isn’t interested in why this might be happening…or whether Allegheny bureaucrats, well aware of the price to be paid for “disparities,” are cooking the books. All the authors have to say (in boldface) is…

This second result is interesting and surprising, given that child welfare workers are known to make racially disparate decisions without algorithms.

“Known” by whom? Don’t ask.

So, for the benefit of OPB and our senator, let’s review:

Because of an algorithm that AP has never seen, that is applied only to the least-serious cases, that produces a mathematical result that doesn’t square with progressive orthodoxy, and that can be manipulated at will by child welfare bureaucrats in remote Pittsburgh, the senator…

…reached out to the [Oregon Human Services] department again following the AP story to ask questions about racial bias…

…and because this “reach out” was going to burn some bureaucratic fingers, the state’s Department of Human Services turned off the computers. Bravo for the senator, who is about as far removed from the realities of kids getting mistreated by adults as is possible. But he got his quotes. OPB got its scare-headline.

The kids at risk? Forgotten.

So what comes next?

OPB made passing reference to a new system that will replace the old algorithm, something called…

…the Structured Decision Making model, which aligns with many other child welfare jurisdictions across the country.

(Which neglects to mention AP’s point that the nasty racist algorithm is also widespread “across the country.”)

And what is this cure-all for “disproportion” and other sins?

First, it’s a trademarked tool: the name should run with that little ®. It is owned by a non-profit, Evident Change (formerly the National Council on Crime and Delinquency, based in Oakland, CA), which says (ready for it?)…

We promote just and equitable social systems for individuals, families, and communities through research, public policy, and practice.

One of the ways they do this is through getting various public-welfare bureaucracies on board with their Structured Decision Making® (SDM) “tool,” which can be bolted on to programs for juvenile delinquents, sex-offenders, courts…you name it, they’ve got it.

Their web site studiously avoids the question: Does Evident Change get paid for providing this tool? If not, this would be a first for Oregon’s largesse with non-profits.

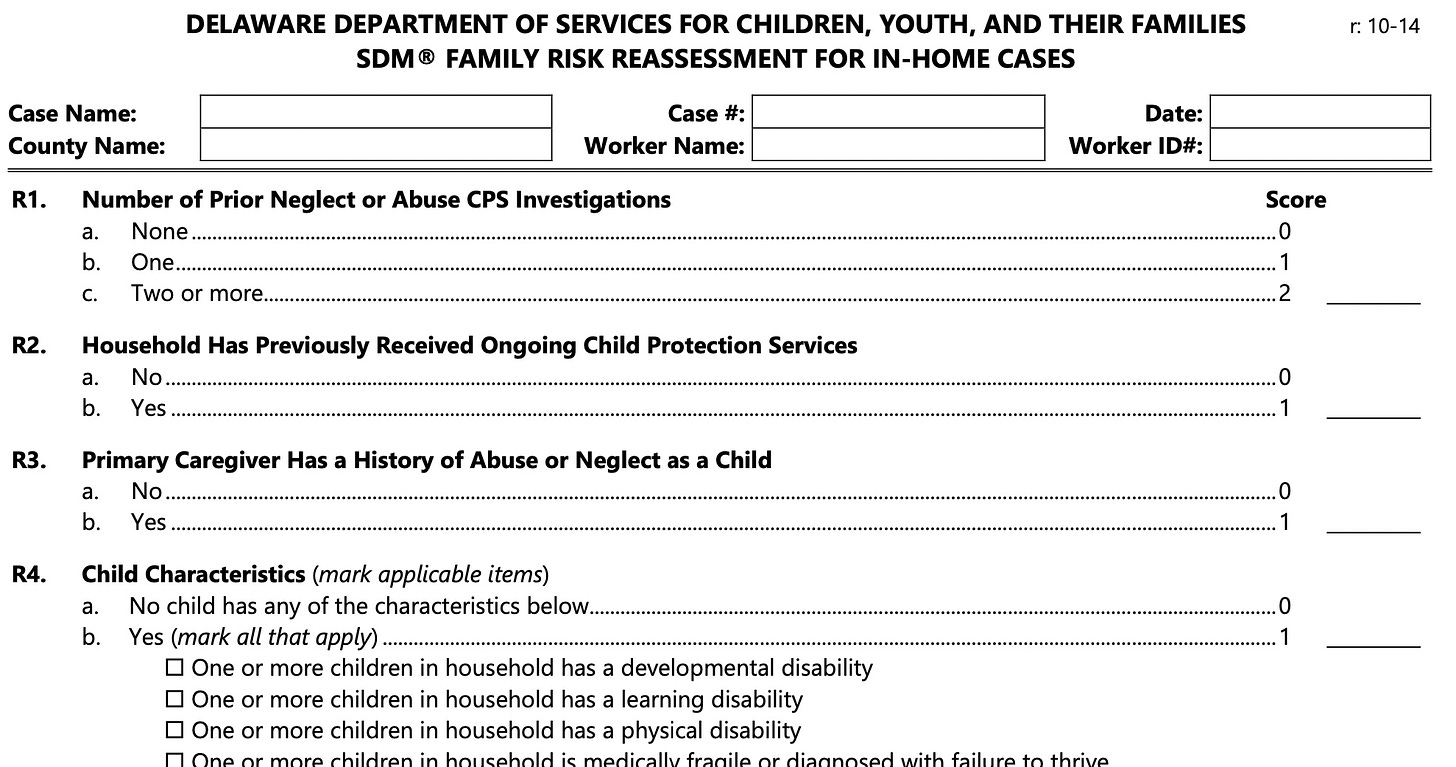

If the Allegheny algorithm is hidden from human eyes, what about the SDM® tool?

There are no samples of the actual “tools” anywhere on the non-profit’s web site but dig around—God bless DuckDuckGo—and you’ll find some examples. For instance, the manual for the Delaware Division of Family Services, where…

Structured Decision Making® (SDM) screening assessment is used for all reports.

It is a mighty tome—216 pages—of step-by-step stuff and mind-numbing detail…

It is actually an algorithm…just without the computer. It would take hours for any halfway-competent social worker to check off the hundreds of boxes and construct the “decision trees” that would, one can hope, arrive at an actual decision. Which, of course, is what algorithms are designed to do, just faster.

The state of Oregon, understandably, doesn’t want anyone (most of all Sen. Wyden) to see the SDM® “tool” for the screeners sitting in a phone bank in Salem, working with people concerned enough to get in touch with the state. But, like little humanoids, the people answering the calls will be certain to ask a myriad of canned, pre-cut questions as they work their way through the SDM® script. To be followed, if the right check-boxes are ticked, by other social workers who will, in turn, be running another SDM® script and constructing elaborate “decision trees.”

A cynic might look at this and get the strange feeling that this is really just a bureaucratic CYA exercise.

“See, Senator, we filled out the SDM® forms!”

But…in the midst of all of the checkboxes, and fill-in-the-blanks, and decision-trees, where does the payoff…

Equity!

…come in?

When the rubber meets the road, what will it look like? Pols like Wyden will never tell you; it’s too esoteric and inside-the-beltway for the doofuses back home.

Nor will the hapless guy—Fariborz Pakseresht, who runs the Department of Human Services—ever let us in on the big secret.

It’s the great wink-wink, nod-nod of Oregon politics.

This leads to various distortions, which the people of Portland live with daily.

If you are, for example, a teacher in the public education factories, where the kiddies essentially went feral after being stuck at home for two years, you know that “equity” means, “Lay off the POCs.” Better to shift to a “bribe the kids to behave” system, heavily padded with SDM®-style paperwork.

GuvKate answered the “equity” question by doing away with testing kids to see if they had actually learned enough to get a high school diploma.

Cops? If you’re hanging on until your pension kicks in (or you haven’t found a slot in the suburbs) it means “Back off,” and watch as the folks in the “community” start shooting each other.

Media? Think you’ll ever read a story in the Oregonian or Willy Week or the Tribune about the epidemic of black-on-black crime?

And that will be a problem for the Oregon Department of Human Services. Pity the hapless intake worker, plugged into the horrors visited upon children, knowing that the numbers will be closely inspected for the slightest smidgen of “disproportion.” How to get those numbers tamped down so that the social workers won’t be bushwhacked by the AP or Sen. Wyden or OPB, or any of the other boo-birds hiding behind “equity?”

Essentially, you will massage the numbers. You will have a powerful incentive to lie.

But here’s the real question: Suppose the “disproportion” numbers are real and not an artifact of an insidious white-systemic-racist plot? What then?

Think Sen. Wyden wants to deal with that?

Child abuse is real; equity is an abstract concept. Kids get hurt; will government protect them, or its bureaucratic keester?

You know the answer.

But, might we at least do kids the favor of accurately reporting how many of them are being abused? And not fudge the figures?

*(Nice to see that the AP is following its own style-book, which mandated capitalizing Blacks during the Floyd Riots.)

**Amazing that the university retains the names of two of the Gilded Age’s most notorious robber-barons.

The victimology here almost seems as if it emerged from the Old Testament. Children victimized by adults may well have their human needs assessed according to the skin color of the perpetrator. It's one thing to have human worth determined by some arcane standard of tribal justice but something else entirely when that justice only matters in relation to some formula where parity among racial groups is the goal. Anti-racism ultimately becomes so arbitrary that the compassionate care afforded depends less on the facts of the case than the demand for a predetermined conclusion. Does this qualify as pre-modern or post-modern?

I'm not sure how society ever rescues itself from the rabbit-holes of dialectical victimhood. Rabbinical judges might help but even they will necessarily consume their own humane instincts for the sake of something that is primitive and incomprehensible. Everything old is new again.

If Presearch isn't on your radar, you might want to give it a try. DuckDuckGo has begun de-prioritizing results with "misinformation", although it is still pretty useful (for now).